Yunqi Hong

yunqihong@ucla.edu

I am a third-year PhD student in the Computer Science Department at UCLA, advised by Prof. Cho-Jui Hsieh.

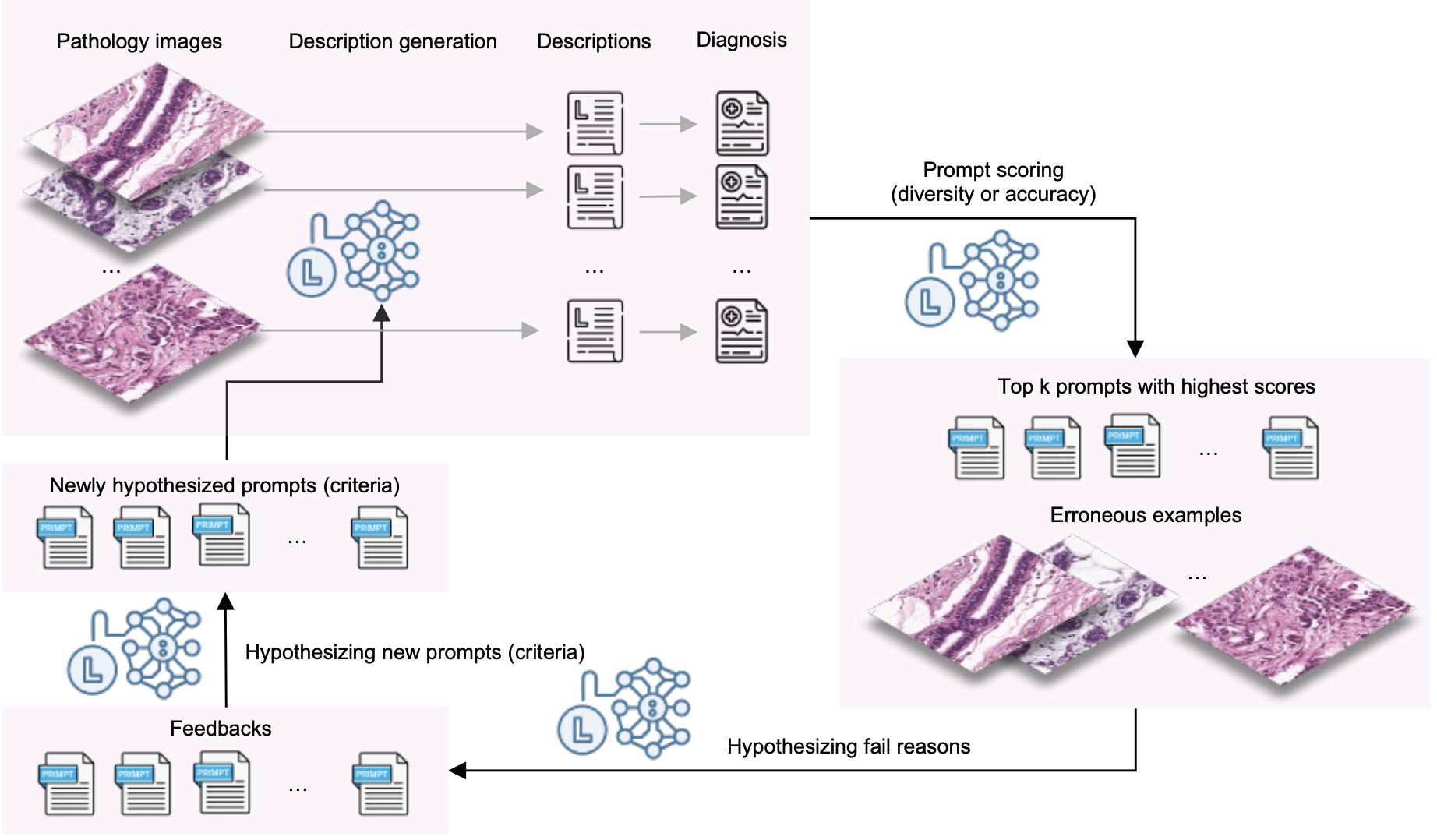

My research focuses on LLM post-training and multimodal reasoning. I am currently working on LLM reinforcement learning, reward modeling, SFT data curation, and regression-aware LLM fine-tuning. I am particularly interested in understanding and mitigating failure modes in optimization, such as reward hacking, distribution shift, and evaluation bias, and in designing scalable methods that improve model performance using limited or unlabeled data. Previously, I explored text-to-image RL, automatic prompt optimization for LLMs, model attribution, scalable graph adversarial attacks, graph representation learning, and recommender systems.

I also collaborate with Prof. Neil Y.C. Lin on interdisciplinary projects that apply LLM-driven methods to biomedical research, such as medical image analysis and drug prediction.

news

| Apr 30, 2026 | Our paper When Distance Distracts: Representation Distance Bias in BT-Loss for Reward Models has been accepted at ICML 2026. |
|---|---|
| Jan 06, 2026 | Our new paper Understanding Reward Hacking in Text-to-Image Reinforcement Learning is out. It uncovers how reward design leads to artifact exploitation in T2I RL; code is available on GitHub. |
| Sep 18, 2025 | Our paper on boosting the fine-grained zero-shot performance of MLLMs with unlabeled data has been accepted at NeurIPS 2025. |